Welcome to PyNNLF

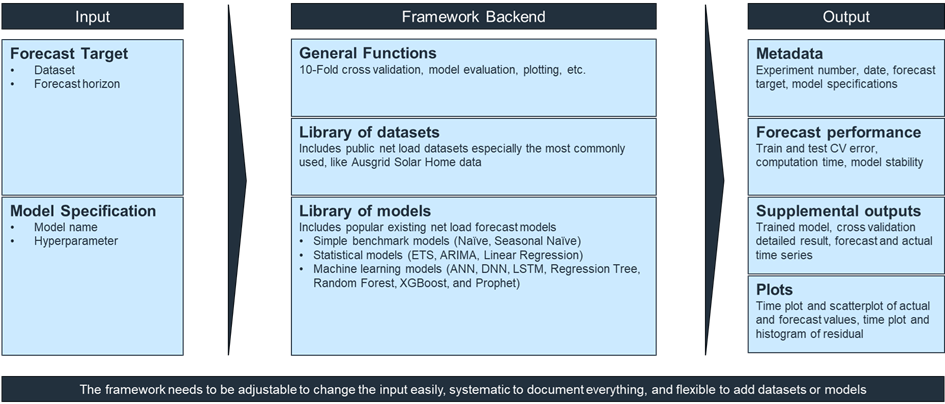

PyNNLF (Python for Network Net Load Forecast) is a tool to evaluate net load forecasting model performance in a reliable and reproducible way.

You can access the GitHub repository here.

Objective

This tool evaluates net load forecasting models reliably and reproducibly. It includes a library of public net load datasets and common forecasting models, including simple benchmark models. Users define the forecast problem and model specification in a YAML spec and run experiments through the Python package.

Users can also add their own datasets and models and modify hyperparameters. Researchers proposing a new or superior model can compare it with the existing models on the same public datasets. The target audience is researchers in academia or industry who evaluate and optimize net load forecasting models.

For worked guidance, see How to add a model and How to modify model hyperparameter.

A visual illustration of the tool workflow is shown below.

Input

- Forecast Target: dataset and forecast horizon defined in example_project/specs/experiment.yaml.
- Model Specification: model and hyperparameters defined in example_project/specs/experiment.yaml.

Output

- a1_experiment_result.csv: Contains accuracy (cross-validated test n-RMSE), stability (standard deviation of the accuracy), training time, and the run seed.
- a2_hyperparameter.csv: Lists the effective hyperparameters used for each model.
- a3_cross_validation_result.csv: Detailed results for each cross-validation split.
- E00001_cv1_plots/: Optional plot folder for the first CV fold when plot generation is enabled.
- cv_test/ and cv_train/: Folders containing time series of observation, forecast, and residuals for each cross-validation split.
- experiment_result/a1_experiment_result.csv: Optional recap across multiple experiments generated by recap_experiments.
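The accuracy and stability values are described above as a cross-validated test n-RMSE and its standard deviation. The sketch below shows one way such a summary could be computed from per-fold scores. The normalization constant `scale`, the use of the sample standard deviation, and the function names `nrmse` and `summarize_folds` are assumptions made for illustration; PyNNLF may normalize and aggregate differently.

```python
import math
import statistics


def nrmse(observed, forecast, scale):
    """RMSE of the residuals divided by a caller-chosen scale.

    `scale` is an assumption: the normalization PyNNLF uses is not stated here.
    """
    if len(observed) != len(forecast) or not observed:
        raise ValueError("observed and forecast must be non-empty and equal length")
    if scale <= 0:
        raise ValueError("scale must be positive")
    mse = sum((o - f) ** 2 for o, f in zip(observed, forecast)) / len(observed)
    return math.sqrt(mse) / scale


def summarize_folds(fold_scores):
    """Return (accuracy, stability): mean and sample stddev of per-fold scores."""
    return statistics.mean(fold_scores), statistics.stdev(fold_scores)


# Hypothetical per-fold test scores, for illustration only.
accuracy, stability = summarize_folds([0.10, 0.20, 0.30])
print(f"accuracy={accuracy:.3f} stability={stability:.3f}")
```

A lower mean indicates a more accurate model, and a lower standard deviation indicates that the accuracy varies less between cross-validation splits.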

To skip plot generation and save storage, pass plot_enabled=False to run_experiment(...), run_experiment_batch(...), or run_tests(...).

Tool Output Naming Convention

Format:

[experiment_no]_[experiment_date]_[dataset]_[forecast_horizon]_[model]_[hyperparameter]

Example:

E00001_250915_ds0_fh30_m6_lr_hp1
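In the example, the model field (m6_lr) itself contains an underscore, so splitting the name on every underscore does not yield exactly six fields. The sketch below is one way to split a name back into its parts. It assumes that only the model field may contain underscores; `ExperimentName` and `parse_experiment_name` are hypothetical helpers, not part of the PyNNLF package.

```python
from typing import NamedTuple


class ExperimentName(NamedTuple):
    experiment_no: str
    experiment_date: str
    dataset: str
    forecast_horizon: str
    model: str
    hyperparameter: str


def parse_experiment_name(name: str) -> ExperimentName:
    """Split an output name into its six fields.

    Assumption: the first four fields and the last field contain no
    underscores, so everything in between belongs to the model field.
    """
    parts = name.split("_")
    if len(parts) < 6:
        raise ValueError(f"expected at least 6 underscore-separated parts: {name!r}")
    head, model_parts, hyperparameter = parts[:4], parts[4:-1], parts[-1]
    return ExperimentName(*head, "_".join(model_parts), hyperparameter)


parsed = parse_experiment_name("E00001_250915_ds0_fh30_m6_lr_hp1")
print(parsed.model)           # m6_lr
print(parsed.hyperparameter)  # hp1
```

The fields are kept as strings; the date is not interpreted here because its exact format is not stated in this section.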